There is a class of failure in multi-node agentic systems that does not show up in evals, does not throw exceptions, and does not appear in your error logs. The agent calls a function that was never registered, gets no result, and continues. If your error handling is lenient - and most production systems are - execution moves forward with a silent gap where an action should have been.

We hit this at Dograh building multi-node voice agents. But the root cause is not specific to voice. It surfaces in any agent architecture where tool availability changes across execution stages and you pass raw conversation history between them.

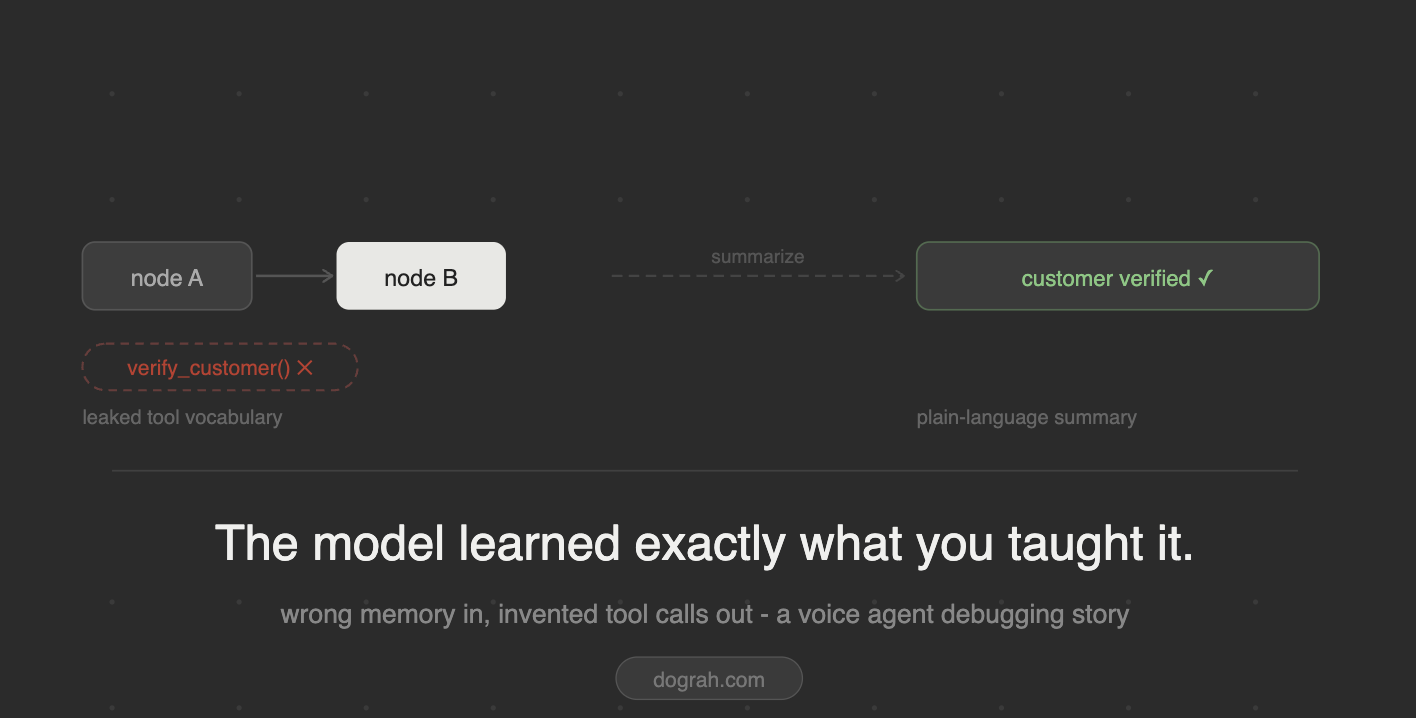

How the failure mode works

Most non-trivial agent workflows are a graph of nodes, each with a scoped tool set. Node A handles identity verification - it has access to verify_identity and fetch_account_summary. Node B handles scheduling - it only gets book_appointment and send_confirmation_sms. The scoping is intentional. You do not want your scheduling node touching account data.

When node A runs, its tool calls accumulate in history:

{

"role": "assistant",

"tool_calls": [{

"id": "c2f1a900-3b12-4e77-a821-df90c1e84b32",

"type": "function",

"function": {

"name": "verify_identity",

"arguments": "{\"customer_id\": \"u_4421\", \"method\": \"dob\"}"

}

}]

},

{

"role": "tool",

"tool_call_id": "c2f1a900-3b12-4e77-a821-df90c1e84b32",

"content": "{\"verified\": true, \"account_tier\": \"premium\"}"

},

{

"role": "assistant",

"tool_calls": [{

"id": "9de03b11-cc74-41f0-b392-7a5f620d91aa",

"type": "function",

"function": {

"name": "fetch_account_summary",

"arguments": "{\"customer_id\": \"u_4421\"}"

}

}]

},

{

"role": "tool",

"tool_call_id": "9de03b11-cc74-41f0-b392-7a5f620d91aa",

"content": "{\"plan\": \"premium_annual\", \"outstanding_balance\": 0, \"open_tickets\": 1}"

}

Node B then receives this history along with its own tool manifest:

"tools": [

{

"type": "function",

"function": {

"name": "book_appointment",

"description": "Book a service appointment for the customer",

"parameters": {

"type": "object",

"properties": {

"slot_id": { "type": "string", "description": "Available slot ID from the scheduling system" },

"customer_id": { "type": "string", "description": "Customer identifier" }

},

"required": ["slot_id", "customer_id"]

}

}

},

{

"type": "function",

"function": {

"name": "send_confirmation_sms",

"description": "Send appointment confirmation via SMS",

"parameters": {

"type": "object",

"properties": {

"customer_id": { "type": "string" },

"message": { "type": "string" }

},

"required": ["customer_id", "message"]

}

}

}

]

Here is where most explanations of this bug stop short. The common assumption is that the model simply re-calls verify_identity or fetch_account_summary because it saw them work in history. That does happen, but it is the less dangerous version of this failure.

The more dangerous version goes like this.

The model receives node B's context. It reads the history and sees verify_identity and fetch_account_summary being called successfully. It then reads its current tool manifest and notices - correctly - that those functions are not in the list. A well-behaved model should conclude: those tools are unavailable here. Do not call them.

But that is not what happens. Instead, the model draws the opposite inference: if tool calls worked in this conversation without appearing in the current declared list, then the declared list is not exhaustive or authoritative. It treats the tool manifest as a suggestion, not a constraint. And from that point it will invent entirely new function names it has never seen anywhere - fabricating whatever seems contextually appropriate for the task at hand.

You will see things like this in node B's output:

{

"role": "assistant",

"tool_calls": [{

"id": "f7c22b10-9a13-4d81-bc44-1e3308df72a4",

"type": "function",

"function": {

"name": "get_available_slots",

"arguments": "{\"customer_id\": \"u_4421\", \"preferred_date\": \"2025-04-10\"}"

}

}]

}

get_available_slots was never defined anywhere. It is not in node A's history. It is not in node B's manifest. The model invented it wholesale because the conversation history established a precedent: tool calls work here even outside the declared list. The prior node's history did not leak a specific function name into this call. It leaked something worse - the belief that the tool registry does not actually constrain what can be invoked.

The more capable the model, the worse this gets. A stronger model is a better in-context learner. It picks up the precedent faster, generalises it more aggressively, and produces more plausible-looking invented function signatures that are harder to catch without explicit registry diffing.

Why evals miss it

Standard node evals test in isolation. You construct a clean synthetic context for node B with no prior node history, verify tool call behaviour, and everything looks correct. The bug only surfaces in end-to-end runs where real accumulated history from node A sits in the context window. By the time you see it in production it has been misfiring across a percentage of executions since deployment - and because the invented function names look plausible, they often pass a casual log review.

Mitigation 1 - post-generation registry validation

Intercept model output before execution, diff the generated tool name against the registered list for the current node, and reject anything that does not match:

async function executeNode(node, messages) {

const response = await llm.complete({

messages,

tools: node.registeredTools

});

const toolCall = response.tool_calls?.[0];

if (!toolCall) return response;

const isRegistered = node.registeredTools

.some(t => t.function.name === toolCall.function.name);

if (!isRegistered) {

observability.log({

event: "hallucinated_tool_call",

node: node.id,

attempted: toolCall.function.name,

available: node.registeredTools.map(t => t.function.name)

});

return retryWithConstraint(node, messages, toolCall.function.name);

}

return executeTool(toolCall);

}

The observability log is the most valuable part. Aggregate hallucinated_tool_call events across nodes over time and you get a precise map of which node transitions are leaking vocabulary and which are triggering the "manifest is not authoritative" inference. That signal exists nowhere else in your stack - without it you are looking at function execution failures in your logs with no way to distinguish a legitimate failed call from an invented one.

The problem is that interception is reactive. The model already made a wrong decision before you caught it. In latency-sensitive applications you are paying for a retry cycle on a percentage of calls, and you have not addressed the source of the inference that caused it.

Mitigation 2 - history summarization at node boundaries

The root cause is that raw tool call history crosses node boundaries carrying two things the downstream model should never see: function vocabulary, and evidence that tool calls succeed outside the declared manifest. Fix both at the source. When a node completes, summarize its execution into plain language before passing anything forward:

async function summarizeNodeHistory(nodeId, rawHistory) {

const toolExecutions = rawHistory

.filter(m => m.role === "assistant" && m.tool_calls)

.map(m => ({

tool: m.tool_calls[0].function.name,

args: JSON.parse(m.tool_calls[0].function.arguments),

result: JSON.parse(

rawHistory.find(r =>

r.tool_call_id === m.tool_calls[0].id

)?.content ?? "{}"

)

}));

const summary = await llm.complete({

messages: [{

role: "user",

content: `Summarize what happened in this agent node as 1-2 plain English sentences.

State outcomes only. Do not mention function names, argument keys, or any system internals.

Tool executions: ${JSON.stringify(toolExecutions)}`

}]

});

return {

role: "system",

content: `[Node ${nodeId} completed]: ${summary.content}`

};

}

The downstream node now receives:

[Node verify]: Customer identity confirmed. Account is active on a premium plan with no outstanding balance.

Instead of two tool call and result pairs carrying verify_identity, fetch_account_summary, their full argument shapes, and their JSON responses. No function names. No schema. No evidence of prior tool calls succeeding outside a manifest. The model reads prior node outcomes as facts about the world. It has no basis to infer that the tool registry is non-authoritative because it has never seen a tool call that exists outside the current manifest. When it needs to act, it looks at what is registered and works strictly from that.

The full pipeline:

async function runWorkflow(nodes, initialMessages) {

let context = initialMessages;

for (const node of nodes) {

const rawHistory = await executeNode(node, context);

const summary = await summarizeNodeHistory(node.id, rawHistory);

context = [

...context.filter(m => m.role === "system"),

summary

];

}

}

The context rebuild is the critical step. You strip all assistant and tool messages from prior nodes and replace them with the plain-language summary. System messages stay for continuity. Everything carrying tool vocabulary - and crucially, everything that establishes the precedent of tool calls working - gets dropped at the boundary.

What this costs

One additional LLM call per node transition for the summarizer. In practice the prompt is small - a compact JSON of tool executions in, one or two sentences out. The token compression on downstream nodes more than offsets this in any workflow beyond three nodes, since you are replacing verbose nested JSON history with a single line per prior node. For voice agents specifically, node transitions happen between conversation turns rather than mid-turn, so the added latency is absorbed in the natural pause.

Running both in production

At Dograh we run summarization as the primary layer and registry validation as a monitoring backstop. When a hallucinated call does slip through - typically because the summarizer produced a poorly-formed output that leaked a function name, or summarized an outcome in a way that implied a tool call could be re-used - the interception layer catches it and logs it. The hallucinated_tool_call event rate in production is the metric we track. It trends toward zero as summarization prompt quality improves. When it spikes, it points precisely to which node transition has a summarization gap, which is a much faster debug loop than reconstructing the failure from raw execution logs.

The underlying principle

The context window is an active input, not a neutral log. Everything you pass across a node boundary shapes the model's understanding of two things: what happened, and what it is capable of doing. Raw tool call history teaches it function names, argument shapes, and - most dangerously - that tool calls can succeed outside the declared manifest. In a multi-node workflow with differing tool sets across nodes, every transition that passes raw history is creating the conditions for this failure.

Summarize at boundaries. Strip the vocabulary and the precedent together. Validate as a backstop and use the signal to improve summarization quality over time. The fix is not clever, but understanding the actual mechanism - that the model is not just re-calling old tools but concluding the manifest is non-authoritative - is what gets you to the right solution rather than patching symptoms downstream.